ROBOTIC AND MIXED REALITY

with

Prof. Roland Snooks & Natalie Alima

CONTEXT:

The workshop was an attempt to integrate augmented reality with real-time robotics to test a new fabrication methodology. As parametric design tools produce increasingly complex designs, present digital fabrication workflows seem ill-equipped to tackle the challenges they pose. This has created a need to integrate new systems, technologies and methodologies to help overcome these challenges.

Mixed reality systems, namely Virtual Reality (V.R.) and Augmented Reality (A.R.), have seen renewed interest in recent times, with new environments and visualization protocols that not only mix real and digital environments but also let users interact with them. Since much of the architectural workflow is built upon visualization, it was only a matter of time before these systems were introduced into it.

Despite its many challenges, the technology holds a lot of promise for identifying and positioning complex forms in space and for visualizing the final product as a whole, thereby simplifying the fabrication process.

PROCESS:

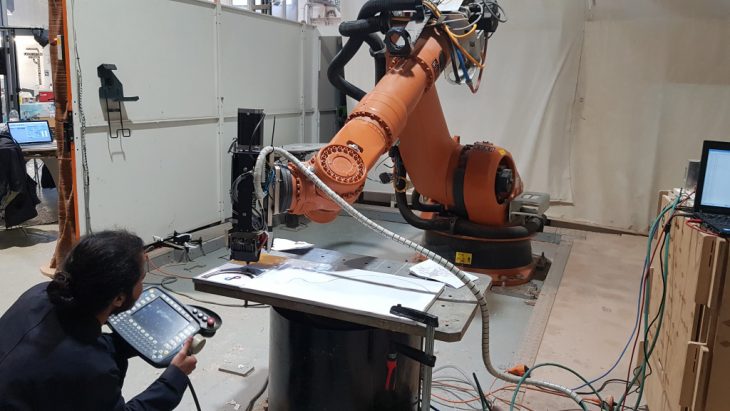

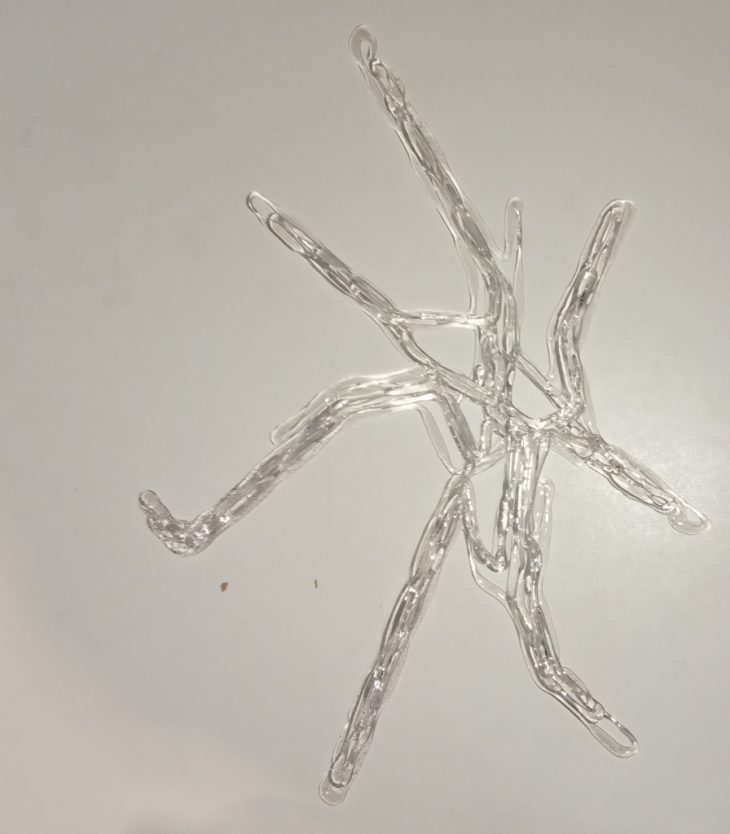

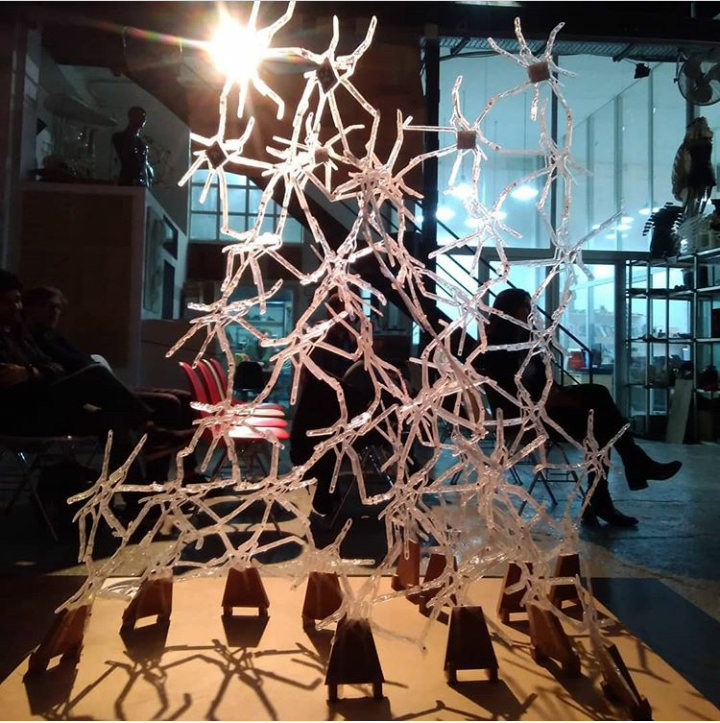

The workshop used the Hololens, an augmented reality headset, to help visualize and fabricate a self-organizing generative model using a Kuka robotic arm with real-time feedback. The material was PET pellets, printed using an extruder fitted on the robotic arm.

TEAMS:

Due to the complexity of the process, the group was divided into 4 teams.

- DESIGN

- REINFORCEMENT

- FABRICATION

- REAL TIME FEEDBACK

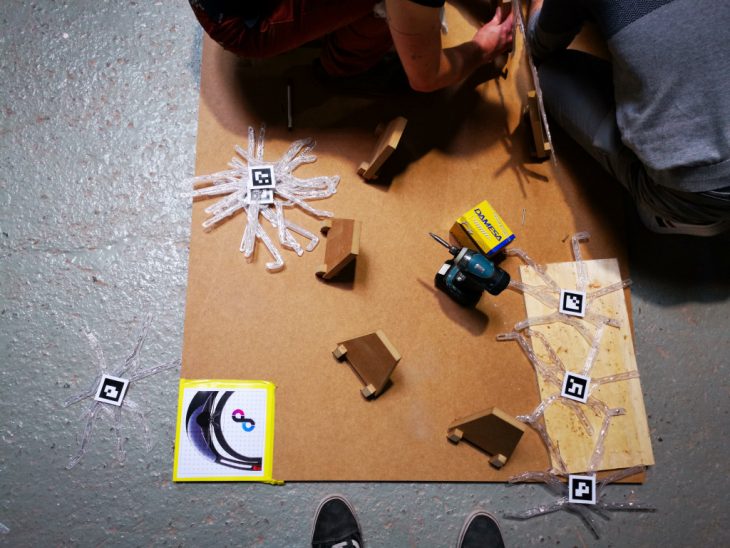

The design team was tasked with unraveling the components of the agent body design and printing them on a flat surface; these were then tagged with Aruco markers and assembled by the fabrication team. The reinforcement team was tasked with printing along the surface of the assembled body to strengthen key stress points along the structure. The feedback team was to develop a system for printing the reinforcement on the model with the help of a sensor that would scan the surface and update the position of the print nozzle through a real-time feedback mechanism.

REINFORCEMENT TEAM:

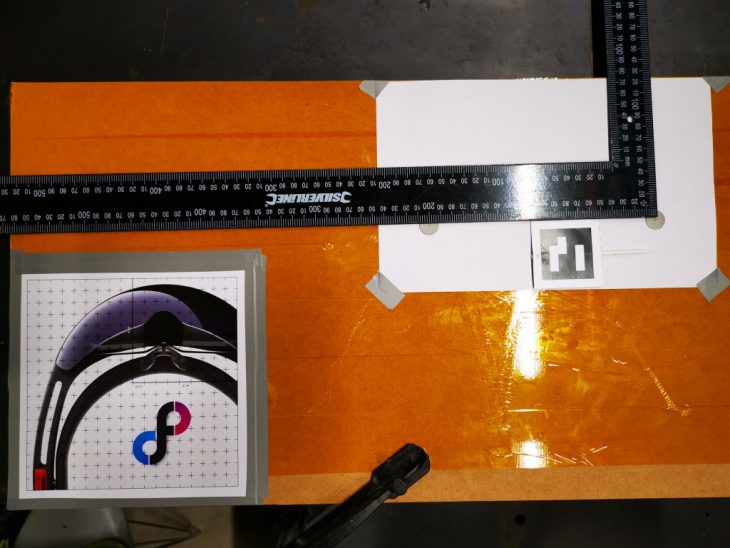

As part of the reinforcement team, we were tasked with generating a toolpath for the robot to reinforce the geometry by printing along the stress lines of the model. This would ensure structural stability. Owing to the complicated nature of the geometry, the Hololens was used to help visualize and identify the path along which the robot would print. An Aruco marker was positioned at each point along the path; using the Hololens, the points were tagged and fed into the Grasshopper simulation. These points were then joined into a polyline, which became the toolpath for the extruder to follow.
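
The step from tagged points to a toolpath can be sketched in plain Python. This is a minimal illustration and not the Grasshopper definition used in the workshop: the function name `resample_polyline`, the sample coordinates and the spacing value are all assumptions. It joins the points in tagging order and places samples at an even spacing along the resulting polyline, so the extruder receives regularly spaced targets. It does not smooth corners; it only evens out the spacing.

```python
import math


def resample_polyline(points, spacing):
    """Return points every `spacing` units along a polyline, keeping both ends."""
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    points = [tuple(float(c) for c in p) for p in points]
    if len(points) < 2:
        return points
    resampled = [points[0]]
    carried = 0.0  # path length covered since the last emitted point
    for a, b in zip(points, points[1:]):
        segment = math.dist(a, b)
        if segment == 0.0:
            continue  # skip duplicate tags
        d = spacing - carried  # distance along this segment to the next sample
        while d <= segment:
            t = d / segment
            resampled.append(tuple(pa + t * (pb - pa) for pa, pb in zip(a, b)))
            d += spacing
        carried = segment - (d - spacing)
    # Always finish exactly on the last tagged point.
    if math.dist(resampled[-1], points[-1]) > 1e-9:
        resampled.append(points[-1])
    return resampled


# Hypothetical tagged points (millimetres), listed in tagging order.
tagged = [(0, 0, 0), (4, 0, 0), (4, 3, 0)]
toolpath = resample_polyline(tagged, spacing=1.0)
```

A smaller spacing gives the robot more targets along the same path; the right value would depend on the extruder and the geometry, which the workshop notes do not specify.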

The key challenges the team had to overcome to print were:

- Ensuring no error between a point tagged with the Aruco marker in the virtual space and its position in the physical space.

- Localizing the model and the space in the robot environment.

- Ensuring enough points were tagged to translate into a smooth curve for the robot to print.

- Ensuring the generated three-dimensional path was collision free.
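
The localization challenge can be illustrated with a common three-point frame construction. The write-up does not record how the model was actually localized, so this is only a sketch under assumptions: three reference points (a frame origin, a point on its X axis and a point in its XY plane) are taken to be known in robot coordinates, and all names and values below are hypothetical.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _unit(v):
    n = math.sqrt(sum(c * c for c in v))
    if n == 0.0:
        raise ValueError("degenerate reference points")
    return tuple(c / n for c in v)


def frame_from_three_points(origin, on_x, in_xy):
    """Build an orthonormal frame from three points given in robot coordinates."""
    x = _unit(_sub(on_x, origin))
    z = _unit(_cross(x, _sub(in_xy, origin)))  # normal of the reference plane
    y = _cross(z, x)                           # completes a right-handed frame
    return tuple(origin), (x, y, z)


def model_to_robot(point, frame):
    """Express a point given in the model frame in robot coordinates."""
    origin, axes = frame
    return tuple(origin[i] + sum(point[k] * axes[k][i] for k in range(3))
                 for i in range(3))


# Hypothetical reference points measured in the robot frame (millimetres).
frame = frame_from_three_points((100, 200, 50), (100, 300, 50), (0, 200, 50))
target = model_to_robot((10, 20, 5), frame)
```

With a frame like this, every toolpath point defined in the model space can be converted into a robot target with one function call, which keeps the virtual and physical positions tied to the same three measured points.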

TESTS:

2D SURFACE TEST: The first test involved checking the data passed from the Hololens into the Grasshopper environment. A large board of known dimensions was taken and random points were marked on it. These points were measured by their distances from each other and from the edges of the board. An Aruco marker flag was placed on each point and tagged using the Hololens. Fologram, the Hololens plugin for Grasshopper, converted these tags into points within the Rhino model space. The points were mapped into the model space with near-perfect accuracy, confirming that the Hololens could map points accurately. The tagged points were then fed into Kuka PRC to create the robotic toolpath for the extruder to print along.
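
A check of this kind can be expressed as a comparison of point-to-point distances, which does not depend on where the model space origin sits. The sketch below is an illustration only: the function names, coordinates and tolerance are assumptions, and no measurements from the workshop are reproduced here.

```python
import math
from itertools import combinations


def pairwise_distances(points):
    """Distance between every pair of points, keyed by index pair."""
    return {(i, j): math.dist(points[i], points[j])
            for i, j in combinations(range(len(points)), 2)}


def distance_errors(reference, tagged):
    """Absolute difference between measured and tagged pairwise distances."""
    if len(reference) != len(tagged):
        raise ValueError("both point lists must have the same length")
    ref = pairwise_distances(reference)
    tag = pairwise_distances(tagged)
    return {pair: abs(tag[pair] - ref[pair]) for pair in ref}


def within_tolerance(reference, tagged, tolerance):
    """True if no pairwise distance deviates by more than `tolerance`."""
    errors = distance_errors(reference, tagged)
    return max(errors.values(), default=0.0) <= tolerance


# Hypothetical values in millimetres: positions measured on the board,
# and the same points as tagged in model space (shifted, one point 1 mm off).
board = [(0, 0, 0), (300, 0, 0), (0, 400, 0)]
tags = [(10, 20, 30), (311, 20, 30), (10, 420, 30)]
errors = distance_errors(board, tags)
```

Because only distances are compared, the board does not need to be aligned with the model axes before the check is run.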

3D SURFACE TEST: Following the success of the 2D test, a more complex 3D surface was generated with points marked on it, and the same test was repeated to check for smooth printing on a 3D surface. This test was mainly configured to check for collisions and for smooth movement of the robotic arm.

FABRICATION:

Many of the challenges in this project were faced by the fabrication team, of which I was later a part. The fabrication team identified each unique component and tagged it with an Aruco marker, which helped identify the component in space. The Hololens was then used to visualize the model in the built environment.

The Rhino model of the entire structure was localized in its physical space using the Hololens and an Aruco marker, creating a visual reference of the model to help the fabrication team locate the components and build them. The process involved a single person wearing the Hololens and directing the rest of the team to the position of each component. The fabrication team then drilled into the arms of the components and riveted them together, building the model part by part.

CHALLENGES & POSSIBLE SOLUTIONS:

The project faced numerous challenges in its implementation.

Inconsistent heating of the pellets in the extruder produced components that were brittle, with little to no flexibility. This was partially due to the poor readings given by a wrongly positioned thermistor. It could be improved by correctly positioning a better thermistor in the extruder to give consistent readings.

Much of the time was consumed by the fabrication process. With only one person wearing the Hololens, it became impossible for the rest of the team to visualize the structure as a whole, thereby limiting work to a single three-person team. An alternative visualization method that lets the entire team see the position and location of each component would greatly reduce the confusion generated by the director's unusually complicated attempts to verbally communicate where a component sits in space and how to fix it.

Moreover, it would have been easier to localize the components in the model and print them in batches belonging to a neighborhood or subgroup, which would avoid the time lost waiting for a specific component that had not been printed yet.
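
One way to form such batches is to walk outwards from a starting component through its neighbours and cut the resulting order into groups. This is a sketch of the suggestion rather than something tried in the workshop: the adjacency table, component names and batch size are hypothetical.

```python
from collections import deque


def batches_by_neighborhood(adjacency, start, batch_size):
    """Order components breadth-first from `start`, then split into batches."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    order = []
    seen = {start}
    queue = deque([start])
    while queue:
        component = queue.popleft()
        order.append(component)
        # Sort the neighbours so the print order is reproducible.
        for neighbour in sorted(adjacency.get(component, ())):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return [order[i:i + batch_size] for i in range(0, len(order), batch_size)]


# Hypothetical assembly graph: which components are riveted to which.
adjacency = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C", "E"],
    "E": ["D"],
}
batches = batches_by_neighborhood(adjacency, start="A", batch_size=2)
```

Printing in this order means each batch contains components that sit close together in the structure, so assembly of one region can begin while the next batch is still printing. Components not connected to the starting one are left out of the result and would need their own starting point.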

CONCLUSION:

Despite its challenges, the workflow suggests some interesting strategies that can help further digital fabrication. By helping teams locate and assemble complex components in space, mixed reality stands to benefit the construction industry.

ROBOTIC AND MIXED REALITY is a workshop of IaaC, Institute for Advanced Architecture of Catalonia, developed at the Master in Robotics and Advanced Construction (M.R.A.C.) in 2018 by:

Students: Sujay Kumarji

Faculty: Prof. Roland Snooks, Natalie Alima, Kunaljit Chadha