SL & ANN model comparison of radius for kerf-bending

ABSTRACT:

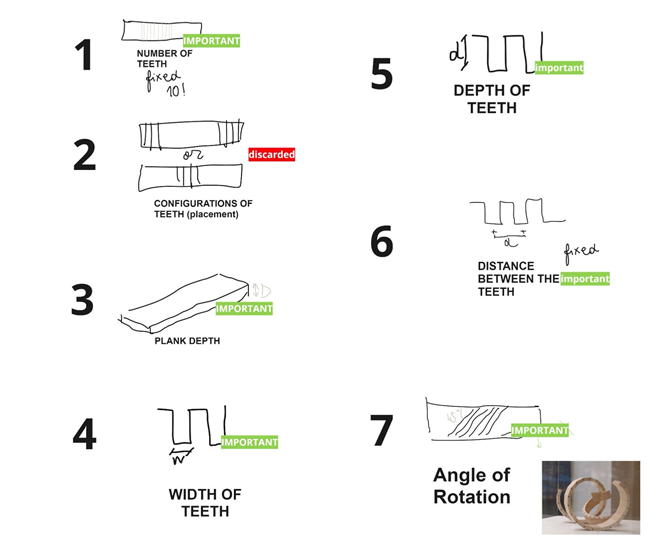

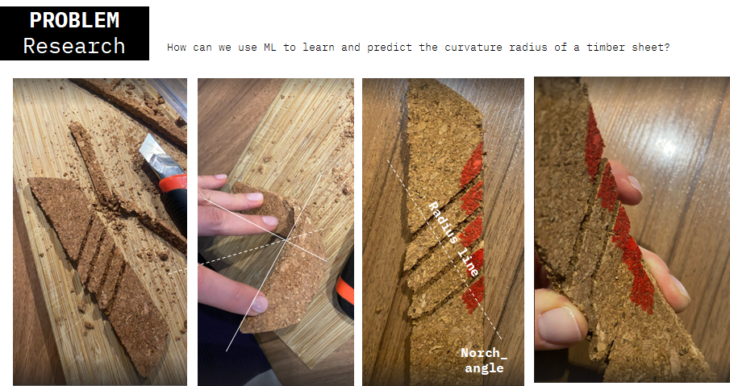

Machine learning plays an increasingly important role in geometric optimization. Established craft processes are becoming robotic and need to be revised for further automation. This study aims to predict the bending radius of a wooden panel from the notch angle, width, depth, and distance, together with the panel folding angle. The number of notches was fixed, and the wooden panel had only one folding element.

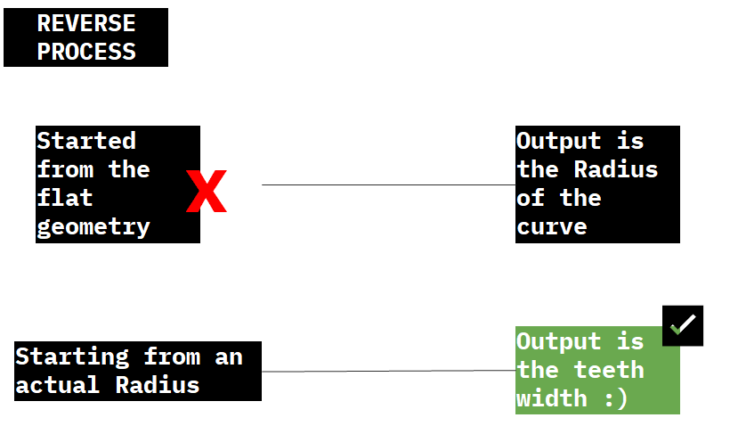

To create a relevant dataset, we used a reverse modeling process in Grasshopper: the bending radius and several other parameters were the input data, and the notch element data were the output. Using shallow learning (SL) and artificial neural network (ANN) regression models, we then tried to predict the bending radius from the rest of the data.

The results pointed to two models, SL non-linear regression and ANN regression, that gave the best curvature predictions. We conclude that these models are relevant for more complex wooden panel curvatures and similar geometric problems.

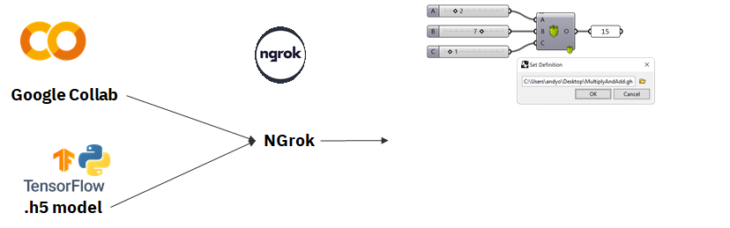

Our workflow aims to generate trained models that help us visualize the results and compare them to the original real-life kerfs. For that, we used Google Colab as a coding platform along with Hops, which allows us to bring the numerical outputs into Grasshopper in order to show and assess the accuracy of the predicted geometries.

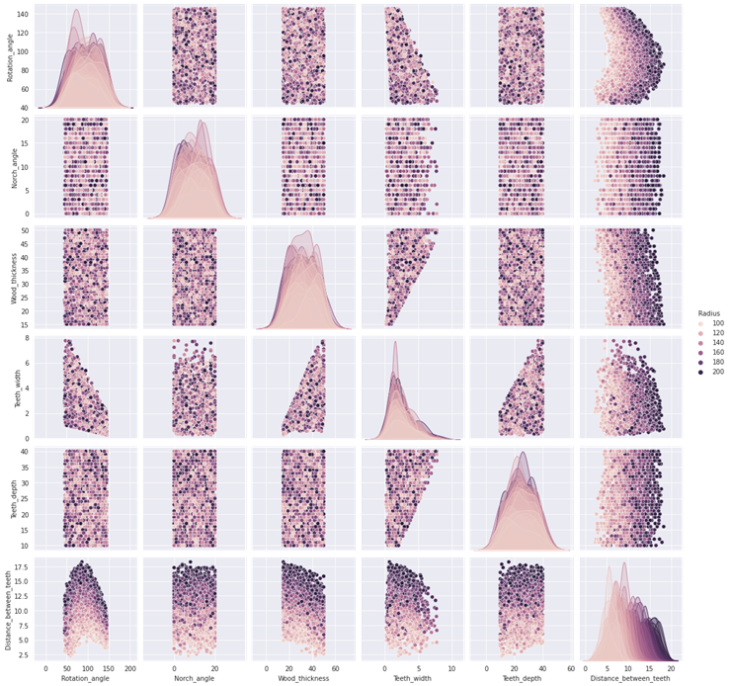

The dataset we created consisted of 2000 values, equally distributed across all the features.

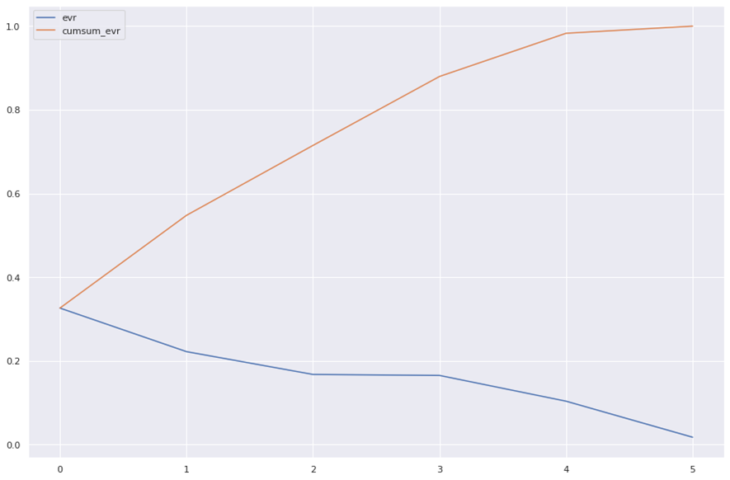

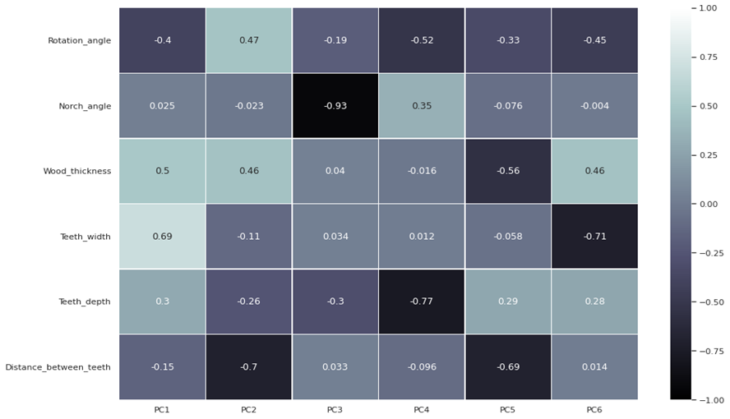

Principal Component Analysis (radius range max=200)

After learning from previous versions of the dataset, we found that a radius range of 0 to 200 mm gave the most accurate predictions.

PCA showed that all the features were contributing to the target feature.
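A PCA step of this kind can be sketched as follows. This is a minimal illustration, assuming scikit-learn's `PCA` on standardized data. The array `data` is a random placeholder with six columns (five inputs plus the radius), not the project dataset, and the choice of two components is an assumption.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)

# Placeholder data: 2000 rows, 6 columns (5 input features + radius).
# Random values only; the real dataset came from Grasshopper.
data = rng.uniform(0.0, 1.0, size=(2000, 6))

# PCA is sensitive to feature scale, so standardize first.
data_scaled = StandardScaler().fit_transform(data)

pca = PCA(n_components=2)  # number of components is an assumption
components = pca.fit_transform(data_scaled)

# Share of total variance captured by each principal component.
print("explained variance ratio:", pca.explained_variance_ratio_)

# Loadings: how strongly each original column contributes to each component.
loadings = pca.components_
print("loadings shape:", loadings.shape)
```

Inspecting the rows of `loadings` is one way to check whether every column carries weight in the leading components.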

For our project, shallow learning performed better than ANN. All the input features in X were scaled using StandardScaler from scikit-learn.
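The scaling step might look like the sketch below. The feature ranges, the 80/20 split, and the random values are assumptions made for illustration; only the use of `StandardScaler` on the inputs `X` comes from the project.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Hypothetical stand-in for the dataset: 2000 samples, 5 input features
# (notch angle, width, depth, distance, folding angle). Ranges are invented.
X = rng.uniform(low=[10, 1, 2, 5, 0], high=[60, 5, 10, 30, 90], size=(2000, 5))
y = rng.uniform(0, 200, size=2000)  # placeholder radius values in mm

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # fit on training data only
X_test_scaled = scaler.transform(X_test)        # reuse the training statistics
```

Fitting the scaler on the training split only keeps information from the test split out of the model.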

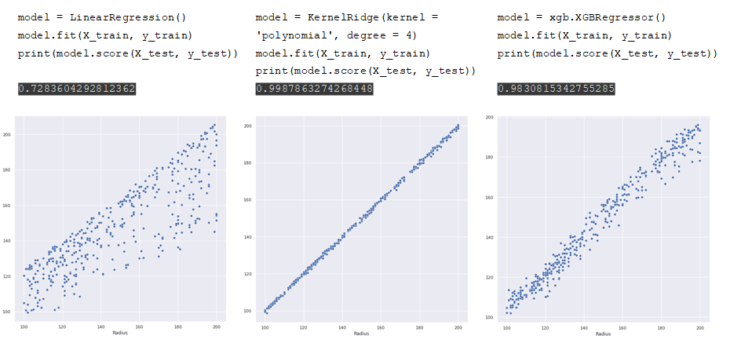

Shallow Learning Regression: LinearRegression, KernelRidge, XGBRegressor

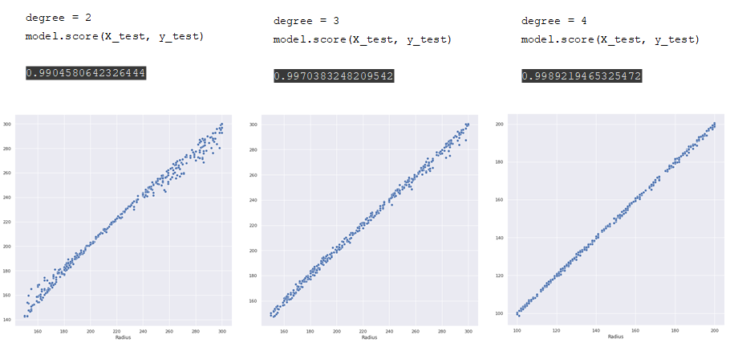

The best model was the polynomial regressor. Degree=4 gave us better results than degree=2 and degree=3.
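A degree comparison like this one can be sketched with a scikit-learn pipeline. The target below is a synthetic non-linear function chosen for illustration, not the kerf geometry, and building the regressor from `PolynomialFeatures` plus `LinearRegression` is an assumption about how it could be implemented.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

rng = np.random.default_rng(2)

# Synthetic placeholder data: 5 inputs and an invented non-linear target.
X = rng.uniform(-1.0, 1.0, size=(2000, 5))
y = (
    100.0
    + 40.0 * X[:, 0] * X[:, 1]
    + 30.0 * X[:, 2] ** 3
    + 20.0 * X[:, 3] ** 4
    + rng.normal(0.0, 0.5, size=2000)
)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

scores = {}
for degree in (2, 3, 4):
    # Scale, expand to polynomial terms, then fit ordinary least squares.
    model = make_pipeline(
        StandardScaler(),
        PolynomialFeatures(degree=degree),
        LinearRegression(),
    )
    model.fit(X_train, y_train)
    scores[degree] = mean_squared_error(y_test, model.predict(X_test))

for degree, mse in scores.items():
    print(f"degree={degree}: test MSE={mse:.3f}")
```

Comparing on a held-out split matters here, because higher degrees add many terms and can overfit the training data.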

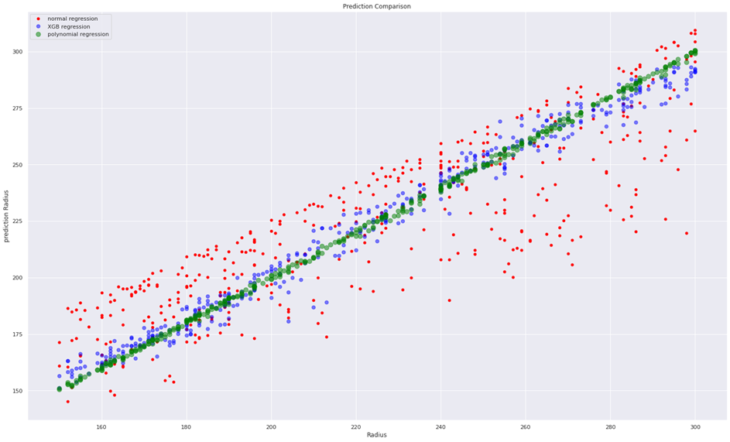

A final comparison between linear regression, XGB regression, and polynomial regression showed polynomial regression performing best.

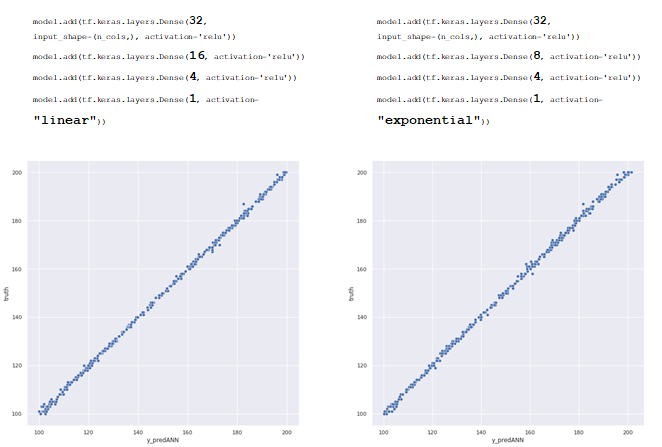

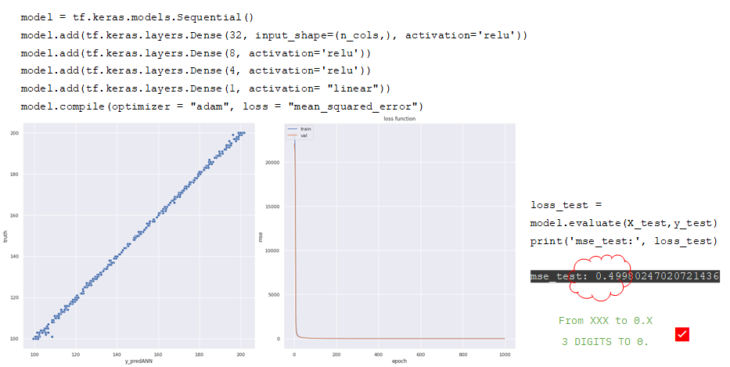

We tried ANN regression models with different layer architectures; the model with 224 parameters and linear activation performed well.

Our dataset worked well for the ANN regression model too, giving us a mean squared error of 0.49.
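For reference, the mean squared error is conventionally defined as below, where $r_i$ is the real radius, $\hat{r}_i$ the predicted radius, and $n$ the number of evaluated samples. These symbols are introduced here for illustration only.

$$
\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left( r_i - \hat{r}_i \right)^2,
\qquad
\mathrm{RMSE} = \sqrt{\mathrm{MSE}}
$$

If the error was computed on the unscaled radius in millimetres, which is an assumption, an MSE of 0.49 corresponds to an RMSE of $\sqrt{0.49} = 0.7$ mm.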

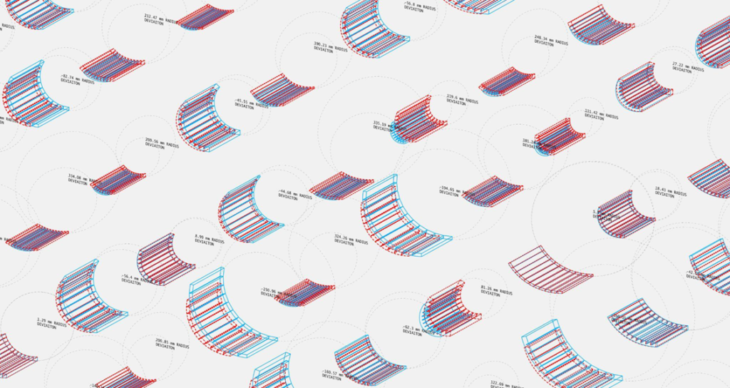

For our final comparison, we brought the ANN and SL regression models into Grasshopper using Hops to compare the differences visually.

HOPS workflow

For the live connection between our machine learning model and Grasshopper, we used Google Colab to run the .h5 model, with the ngrok library as a dependency.

The GIF below shows the deviation between the real and the predicted model for ANN regression.

GIF showing model comparison for SL and ANN regression

CONCLUSION

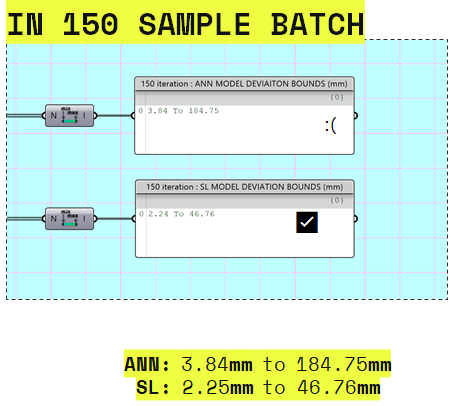

Finally, we compared the predicted SL and ANN models simultaneously with the real model and recorded the deviation over 150 iterations in Grasshopper. After calculating the bounds of the deviation for both models, we found that SL performed best, giving a narrower deviation range.
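The bounds calculation can be sketched in a few lines of NumPy. The helper `deviation_bounds` and the two prediction arrays are hypothetical; the noise levels are invented placeholders and do not reproduce the measured deviations.

```python
import numpy as np


def deviation_bounds(real, predicted):
    """Return (min, max, range) of the signed deviation predicted - real."""
    deviation = np.asarray(predicted, dtype=float) - np.asarray(real, dtype=float)
    low, high = deviation.min(), deviation.max()
    return low, high, high - low


rng = np.random.default_rng(3)

# 150 iterations, as in the comparison; radius values are random placeholders.
real_radius = rng.uniform(0.0, 200.0, size=150)

# Hypothetical predictions from two models with invented noise levels.
pred_a = real_radius + rng.normal(0.0, 0.3, size=150)
pred_b = real_radius + rng.normal(0.0, 0.7, size=150)

for name, pred in (("model A", pred_a), ("model B", pred_b)):
    low, high, span = deviation_bounds(real_radius, pred)
    print(f"{name}: min={low:.3f}, max={high:.3f}, range={span:.3f}")
```

The model with the smaller range deviates less from the real geometry over the recorded iterations.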

Machine Learning_for_Kerf Bending is a project of IAAC, Institute for Advanced Architecture of Catalonia developed at MaCAD (Masters in Advanced Computation for Architecture & Design) in 2022 by Students: Mahmoud Ramdane, Naitik Sharma & Olga Poletkina and faculty: Gabriella Rossi and assistant faculty: Hesham Shawqy