FluxSight

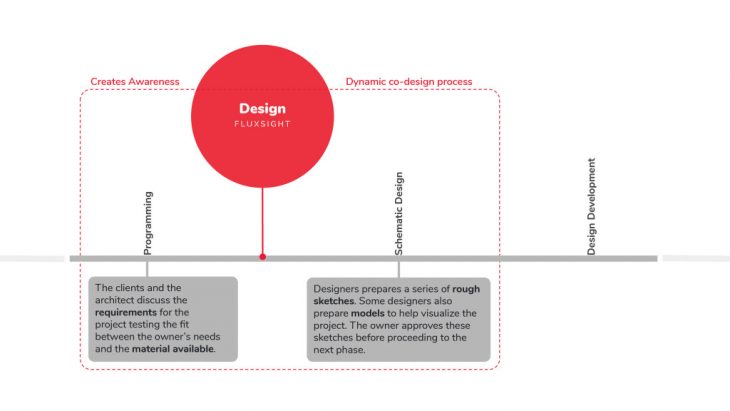

FluxSight is an augmented reality design tool that helps architects and designers create objects at a wide range of scales using reclaimed resources. The process explores freeform modelling through hand tracking and gesture detection in the early design stage, taking resource availability into account and combining it with performance criteria such as solar radiation. The main objective of the tool is to promote and raise awareness of the importance of material reusability, potentially reducing the environmental and economic impact of our built environment.

This tool is intended to be used by experts in the AEC industry, with a strong focus on designers. Further explorations will investigate the possibility of expanding it to non-experts and the general public, engaging them in co-design processes.

Workflow

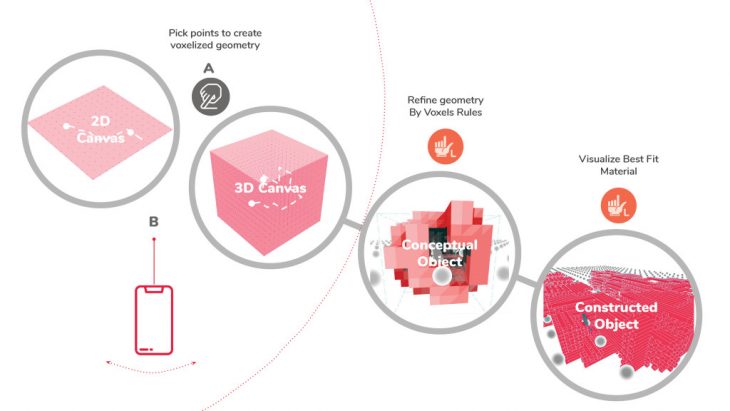

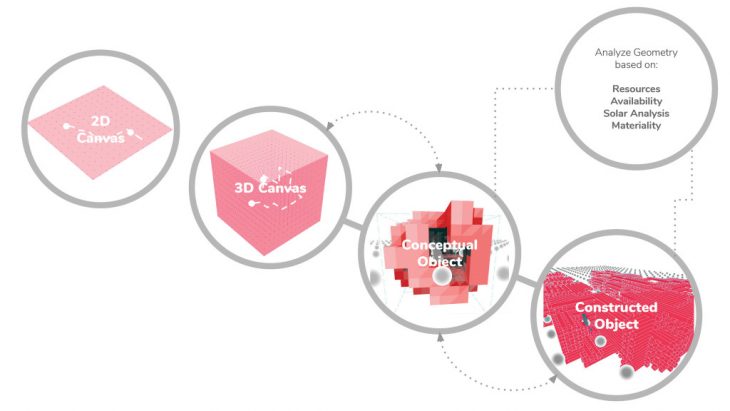

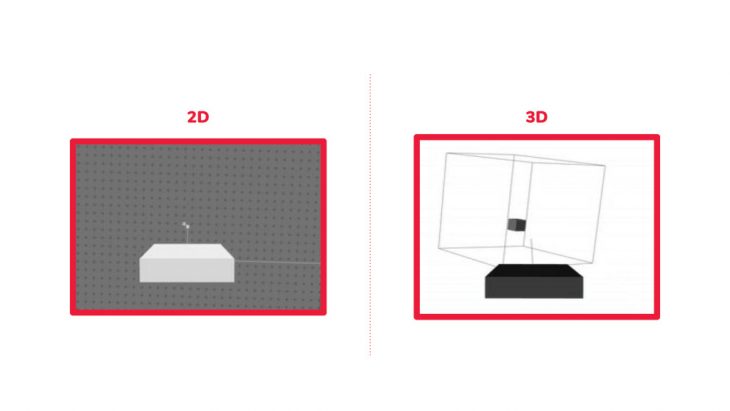

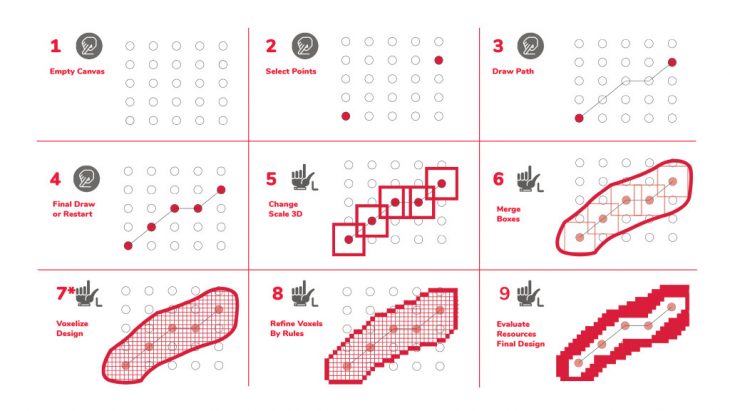

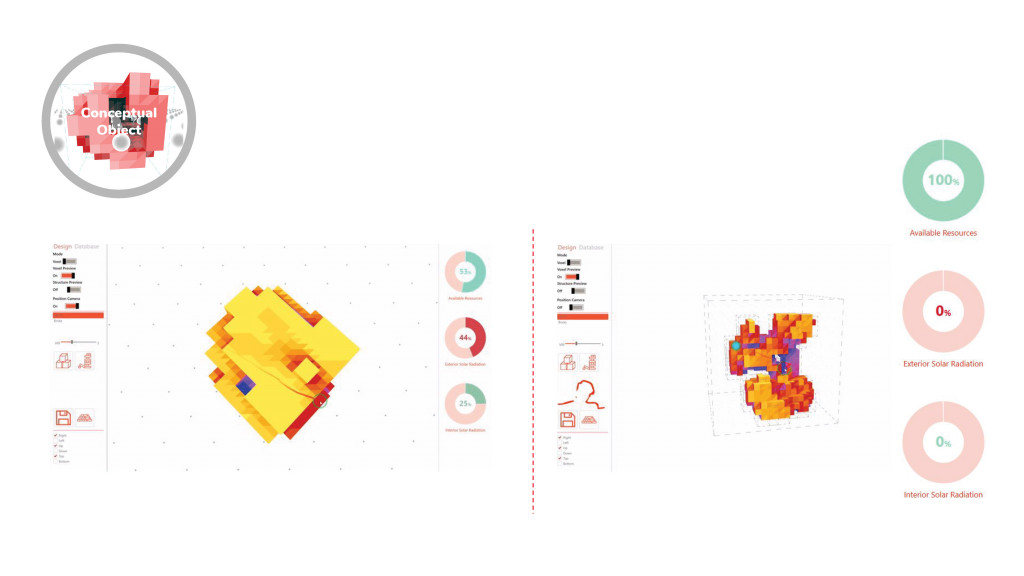

The project is divided into three phases: a 2D and 3D canvas for freeform modelling, in which points are picked through hand tracking and smartphone position tracking; a conceptual object generated from the basic shapes drawn in the previous step; and the visualization of the constructed object with the selected materiality. A more detailed explanation of each step can be found at the end of this entry.

Ideal Outcome

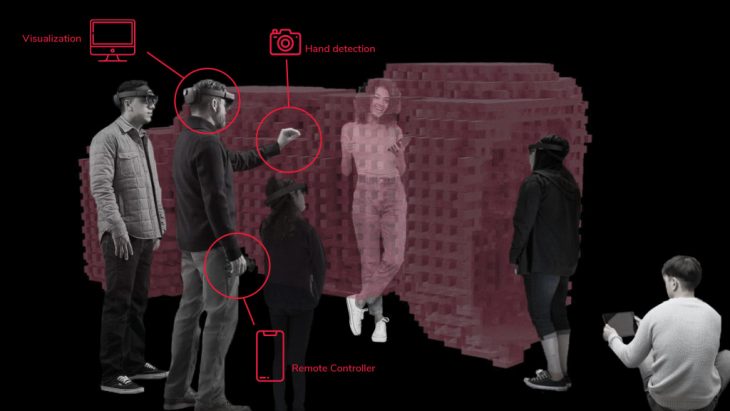

The ideal setup for the tool would be an augmented reality environment. The designer would have control of the design, and the clients would interact with it through other augmented reality glasses or through an application on their own devices.

Augmented Reality Glasses

Augmented Reality | Tablet Version

Non Augmented Reality Version

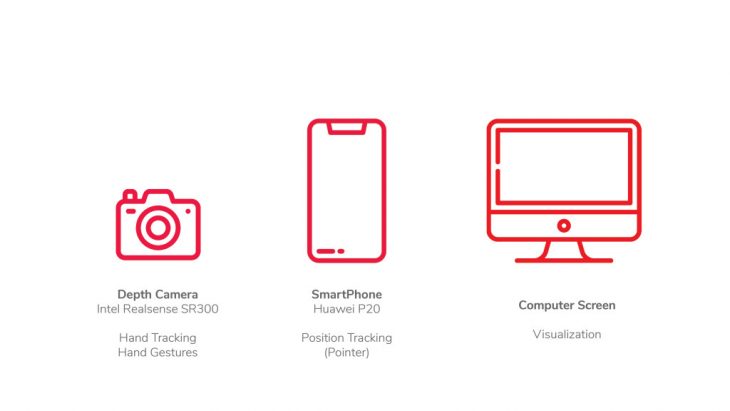

Because the required tools were not accessible during the development of this research, a non-augmented reality version was used: the computer screen allows for design visualization, a camera tracks the hand gestures, and the phone works as the remote controller.

References

mTable 2002

mTable 2002 is an application developed by professors Gramazio and Kohler at ETH Zurich. It enables people to design their own tables with a phone application. What we find interesting in this tool is how it guides the user towards a design that can be manufactured.

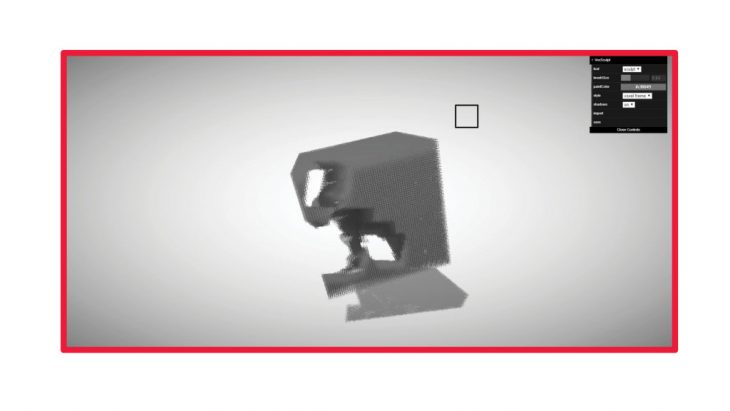

Sculpt with voxels

In this second example, the interest was in how the design space is displayed. It works like a cube of clay from which you subtract, and to which you add, material.

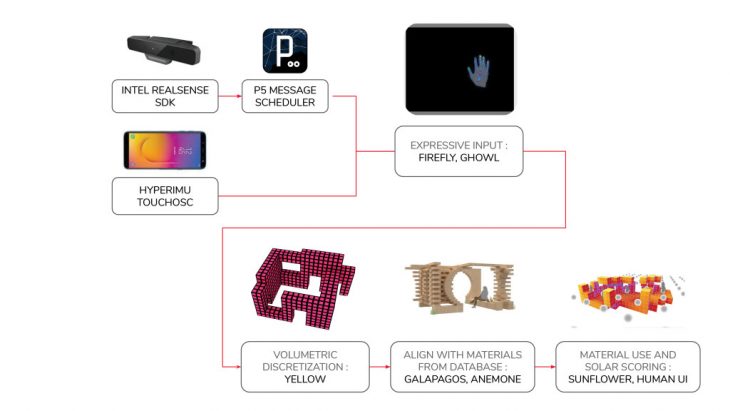

Hardware

Technologies

Technology overview. Technical details are available in the project repository:

https://github.com/MRAC-IAAC/fluxsight

Selection by phone

Phone drawing implementation in the 2D and 3D canvas using the Rhinoceros environment

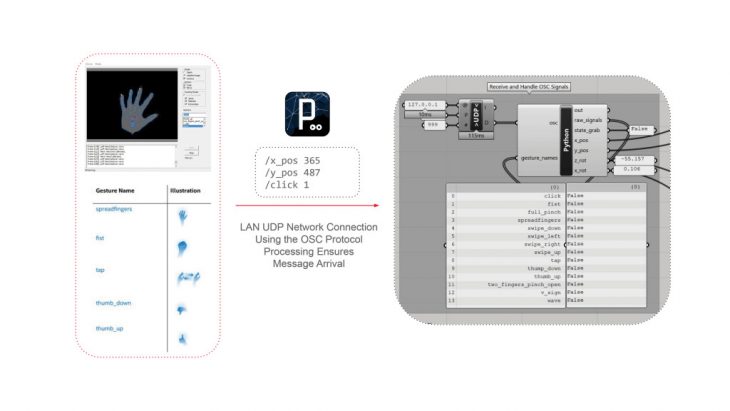

Basic Gestures Control

Hand Gesture Detection using Realsense technology inside Grasshopper.

Proof of concept

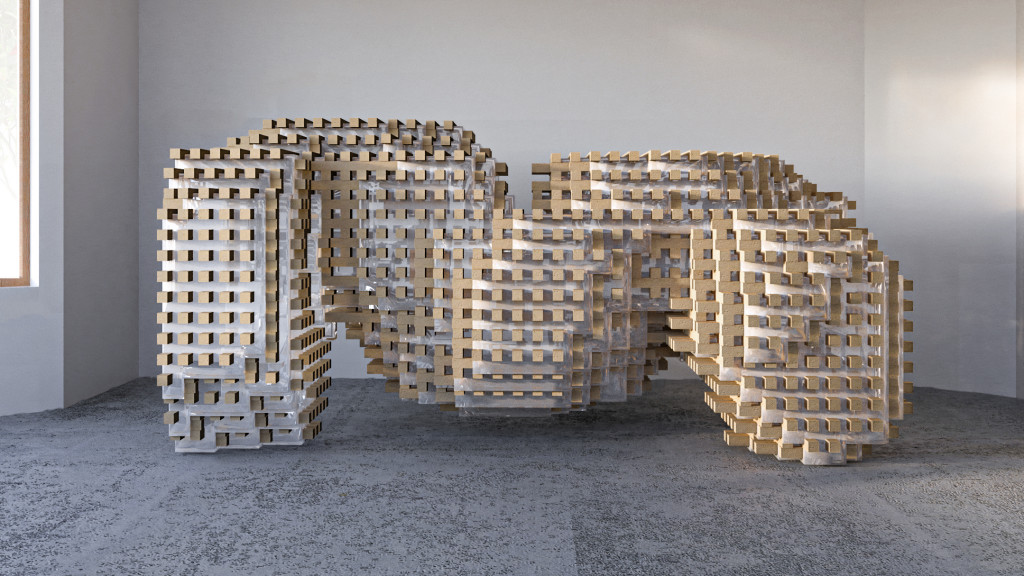

Design Explorations

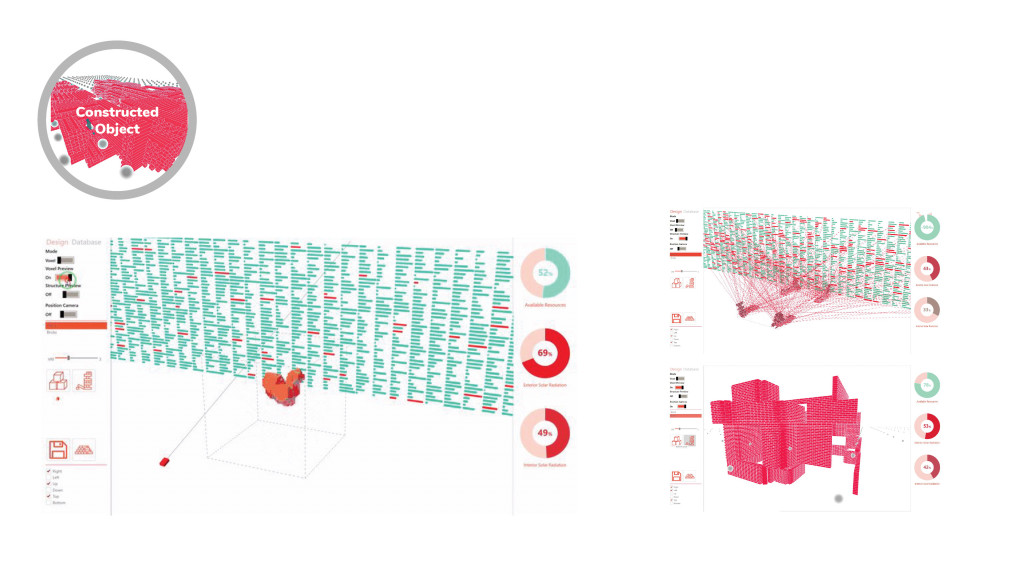

Design explorations | Material Output

Computational Workflow

User Interface | control options

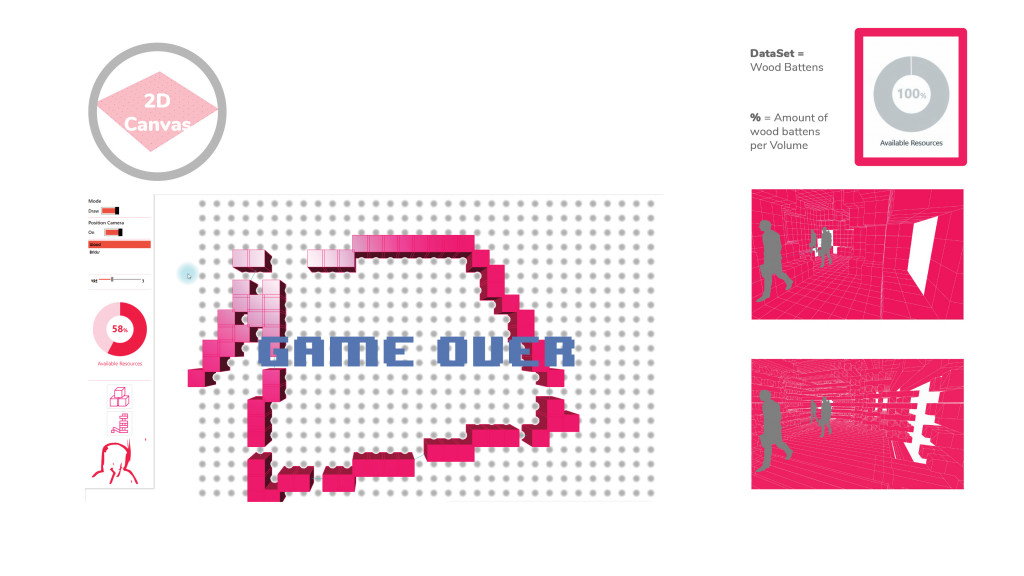

2D canvas | Gamification

The user inputs are points in a 2D grid. These points define the volume that gets voxelized in later steps, and they can be controlled by hand tracking and gesture control. The color of the previewed geometry changes based on resource availability, in this case a limited number of wood battens available to build a structure. A “GAME OVER” sign appears every time the user exceeds the quantity of material available.
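The material check described above can be illustrated with a minimal Python sketch. It is not the project's Grasshopper definition: the function names, the cell size, the number of battens per voxel and the color thresholds are all assumptions chosen for illustration. The picked points are treated as a closed outline, grid cells whose centers fall inside it are counted as voxels, and the batten demand is compared with the available stock.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(x: float, y: float, polygon: List[Point]) -> bool:
    """Ray-casting test: is (x, y) inside the closed outline?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def voxelize_footprint(polygon: List[Point], cell: float = 1.0) -> List[Tuple[int, int]]:
    """Return grid cells (i, j) whose centers lie inside the outline."""
    xs = [p[0] for p in polygon]
    ys = [p[1] for p in polygon]
    cells = []
    for i in range(math.floor(min(xs) / cell), math.ceil(max(xs) / cell)):
        for j in range(math.floor(min(ys) / cell), math.ceil(max(ys) / cell)):
            cx, cy = (i + 0.5) * cell, (j + 0.5) * cell
            if point_in_polygon(cx, cy, polygon):
                cells.append((i, j))
    return cells


def material_status(n_cells: int, levels: int, battens_per_voxel: int,
                    battens_available: int) -> Tuple[int, str]:
    """Compare batten demand with stock (thresholds are assumed values)."""
    required = n_cells * levels * battens_per_voxel
    if required > battens_available:
        return required, "GAME OVER"
    if battens_available == 0:
        return required, "green"  # nothing required, nothing available
    ratio = required / battens_available
    return required, "green" if ratio < 0.75 else "orange"


# Hypothetical input: a 4 x 3 rectangle picked on the grid, extruded 2 levels.
outline = [(0, 0), (4, 0), (4, 3), (0, 3)]
cells = voxelize_footprint(outline, cell=1.0)
print(material_status(len(cells), levels=2, battens_per_voxel=4, battens_available=100))
```

In this sketch the preview color is derived from the ratio of required to available battens, so the designer gets a warning color before the budget is actually exceeded.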

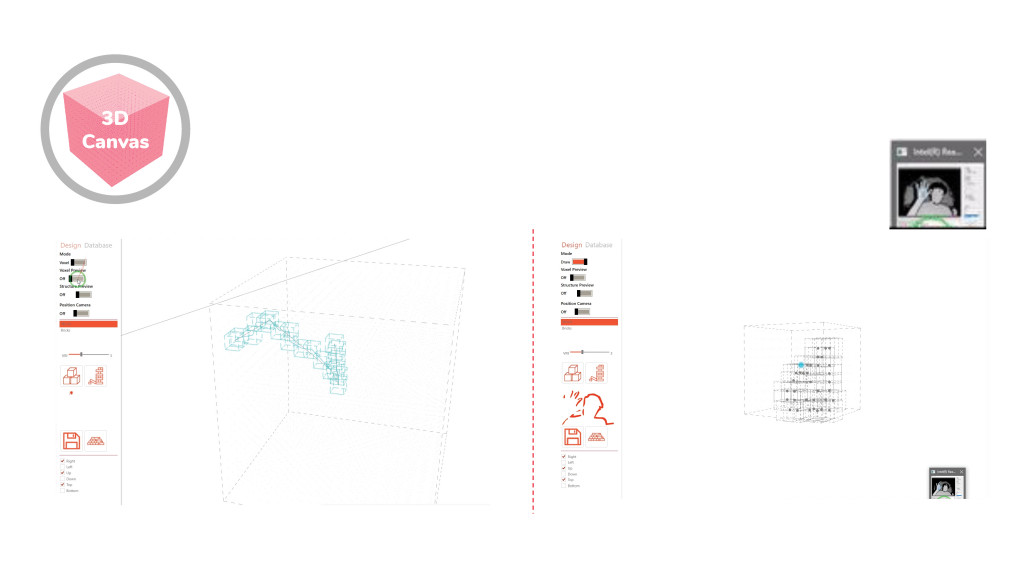

3D canvas

Further experimentation was done in a 3D canvas, applying the same principles as in the 2D canvas. In this example, points are selected on varying spatial planes using a simple “OK” hand gesture.
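One common way to recognize an “OK” gesture from tracked hand landmarks is to test whether the thumb tip and index fingertip touch while another finger stays extended. The sketch below shows that heuristic in plain Python. It is an assumption about how such a detector could work, not the project's Realsense and Grasshopper implementation, and the landmark names and thresholds are hypothetical.

```python
import math
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def is_ok_gesture(landmarks: Dict[str, Vec3], pinch_ratio: float = 0.25) -> bool:
    """Heuristic OK-gesture test on 3D landmarks (names are hypothetical)."""
    # Palm length is used as a scale so the test does not depend on
    # how far the hand is from the camera.
    palm = math.dist(landmarks["wrist"], landmarks["middle_base"])
    if palm == 0:
        return False
    pinch = math.dist(landmarks["thumb_tip"], landmarks["index_tip"])
    # Require the middle finger to be extended, to separate OK from a fist.
    middle_extended = math.dist(landmarks["wrist"], landmarks["middle_tip"]) > 1.5 * palm
    return pinch / palm < pinch_ratio and middle_extended


# Hypothetical landmark sets, in arbitrary units.
ok_hand = {
    "wrist": (0, 0, 0), "middle_base": (0, 10, 0), "middle_tip": (0, 18, 0),
    "thumb_tip": (3, 8, 0), "index_tip": (3.5, 8.5, 0),
}
open_hand = dict(ok_hand, thumb_tip=(-6, 6, 0), index_tip=(4, 17, 0))

print(is_ok_gesture(ok_hand), is_ok_gesture(open_hand))
```

When the test returns true, the current fingertip position could be committed as a point on the active plane.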

Conceptual Object

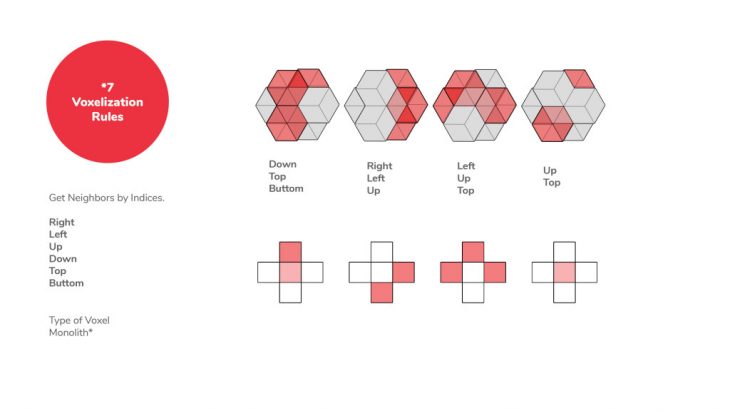

This phase is considered iterative, as it allows the user to constantly change and refit the shape according to the voxelization rules and the performance charts.

Constructed Object

Finally, in this phase the user can visualize the materials available for their design and can go back to previous steps interactively to optimize the amount of reclaimed material fitted to the object.

Examples of objects created with the tool

FluxSight is a project of IAAC, Institute for Advanced Architecture of Catalonia, developed in the Master in Robotics and Advanced Construction 2019/2020. Students: Anna Batallé, Irem Yagmur Cebeci, Matthew Gordon, Roberto Vargas. Faculty: Angel Muñoz. Assistant: Agustina Pallazzo.