CLAY ENGRAVING // WORKSHOP 3.2

This workshop introduced students to XR development and robotics using the Unity3D game engine. It gave students a crash course in Unity through highly focused technical lectures and exercises. Each session had specific learning outcomes, which built upon each other towards the ability to develop an interactive architectural scenario incorporating real-time robotic interaction. Students learnt:

- Basic modeling, rendering, and animation skills in the Unity3D Game Engine

- Specific workflows for authoring VR content in Unity

- How to calibrate relationships between virtual and real objects

- How to author and prototype robotic workflows and simulations in XR

- How to think independently about novel robotic workflow concepts and implement them

Concept

The human body holds a wealth of tacit knowledge, especially in master builders. The knowledge embodied by these masters is a precious repository of profound skills and know-how. With the development of robotic fabrication, we believe that recording this unstructured data through sensing and transferring it to robotic fabrication can enable more complex applications and preserve craftsmanship that would otherwise be lost.

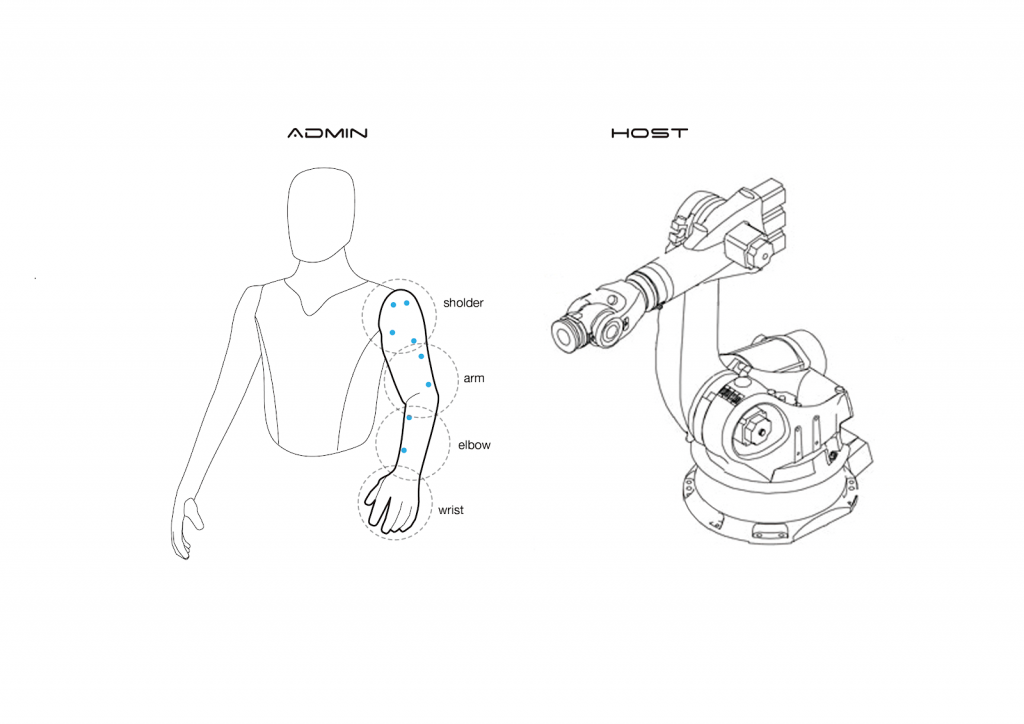

In a previous project (Hardware II), we transferred human motion to another human through an exo-arm using an EMG sensor and IMUs.

In this project, we used the same base concept to control a robotic arm using human motion captured with an HTC Vive tracker. Unity is used to create the virtual environment and to control the robot through it. The virtual environment creates an engaging experience, and as a result the artist can be more expressive in their art.

Setup

The setup used:

- Unity

- ROS Sharp

- ROS

- HTC Vive VR with trackers

- UR-10e

Process

We used Unity 2019 to create the virtual environment. Custom C# scripts in Unity respond as the tracker is moved. A Vive tracker was mounted on the drawing tool, and a clay panel measuring 400×400 mm was placed in the robot's working area. As the artist moves the tool, a tool path is generated.

As the tool path is generated, the robot follows it and engraves the clay panel in real time.
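The tool-path step can be illustrated with a minimal sketch. It is written in Python for brevity rather than in the C# used for the project's Unity scripts, and it is not the project's actual code. The function names, the 2 mm waypoint spacing, the axis mapping between the Unity frame and the robot frame, and the assumption that the panel is centred on the frame origin are all hypothetical; only the 400×400 mm panel size comes from the text.

```python
import math

PANEL_SIZE_MM = 400.0   # clay panel edge length, as stated in the text
MIN_SPACING_MM = 2.0    # hypothetical: minimum gap between kept waypoints


def unity_to_robot_mm(p):
    """Convert a Unity point (metres, left-handed, y-up) to a right-handed,
    z-up frame in millimetres. The axis mapping is an assumption."""
    x, y, z = p
    return (z * 1000.0, -x * 1000.0, y * 1000.0)


def clamp_to_panel(p):
    """Keep x and y inside the panel, assumed centred on the frame origin."""
    half = PANEL_SIZE_MM / 2.0
    x, y, z = p
    return (max(-half, min(half, x)), max(-half, min(half, y)), z)


def build_tool_path(tracker_samples):
    """Turn raw tracker samples into a thinned list of waypoints."""
    path = []
    for sample in tracker_samples:
        point = clamp_to_panel(unity_to_robot_mm(sample))
        # Skip samples that are too close to the last kept waypoint,
        # so small hand jitter does not flood the robot with targets.
        if path and math.dist(path[-1], point) < MIN_SPACING_MM:
            continue
        path.append(point)
    return path


if __name__ == "__main__":
    samples = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.001), (0.0, 0.0, 0.01), (0.5, 0.0, 0.0)]
    for waypoint in build_tool_path(samples):
        print(waypoint)
```

Thinning by distance rather than by time keeps the waypoint density roughly constant along the stroke regardless of how fast the artist moves, which is one plausible way to keep a real-time robot from lagging behind the hand.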

Environment

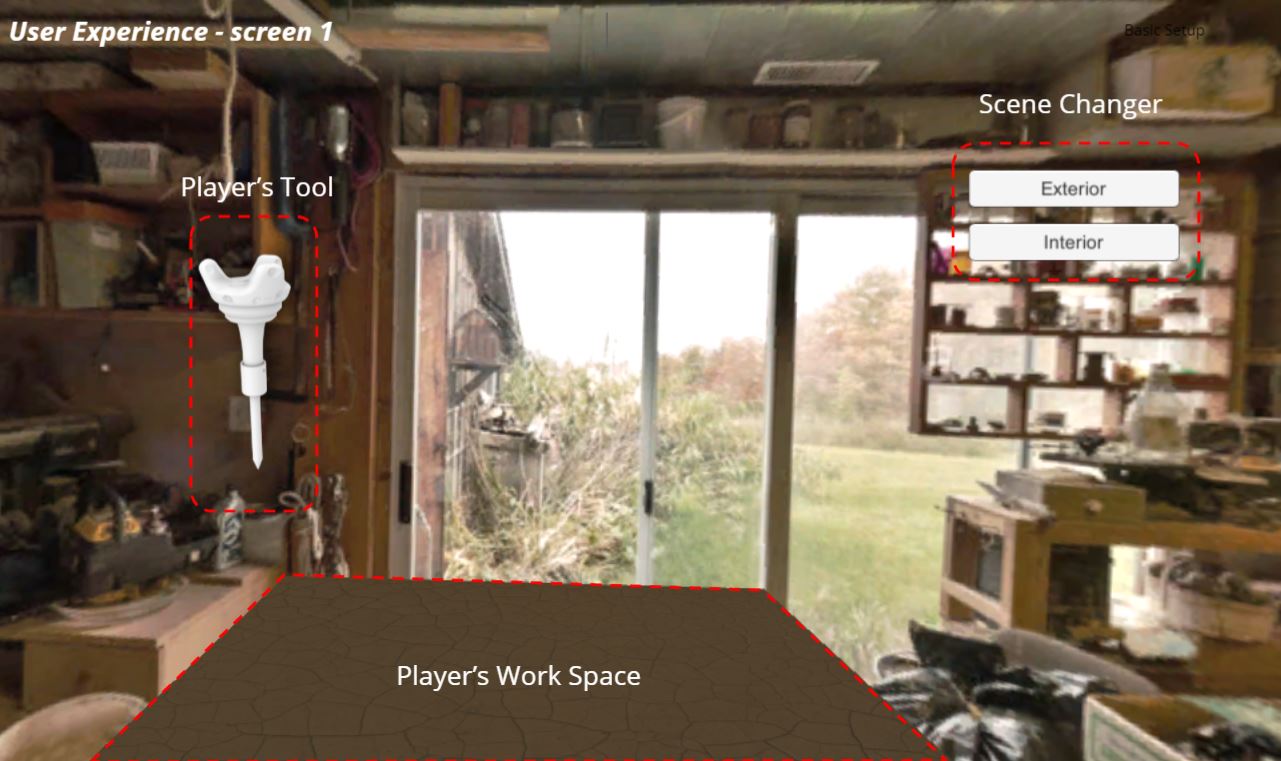

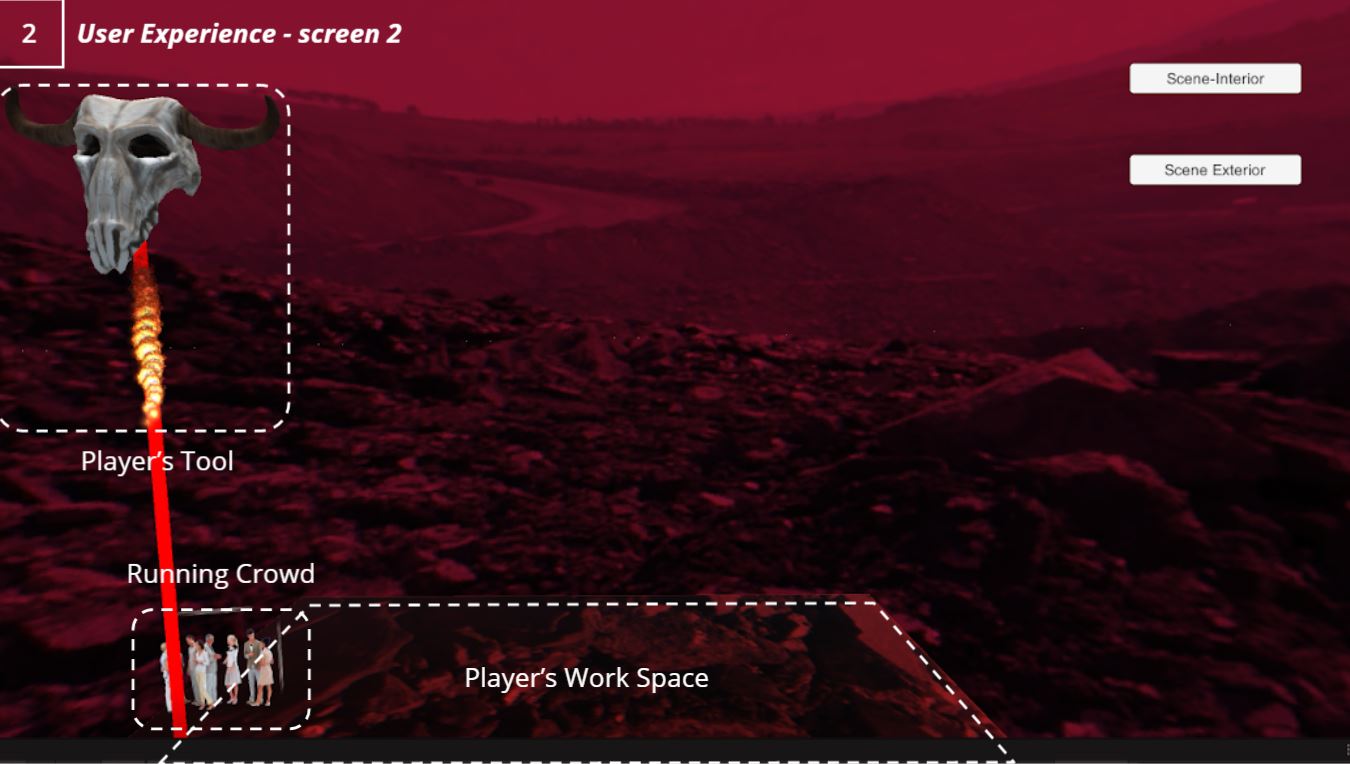

The virtual environment has two scenes:

- Interior Scene – Soothing environment with calm music

- Exterior Scene – Aggressive environment and engaging music for emotional involvement of the artist/user

The experience starts with the calm interior scene, set inside a workshop. The user can switch from the interior to the exterior at any time. Each scene has a different tool design. The interior scene's tool has a plain design with a pen mounted, mirroring the way the artist holds the physical tool. The exterior scene has a skull tool that casts a fire-beam ray onto a ground plane, which deforms as the user presses the tool on the table in real life. To make the experience more game-like, the exterior scene has a crowd of people who try to escape the fire beam, which increases user interaction.

Scene 1 – Interior Scene

Scene 2 – Exterior Scene

Tool Design

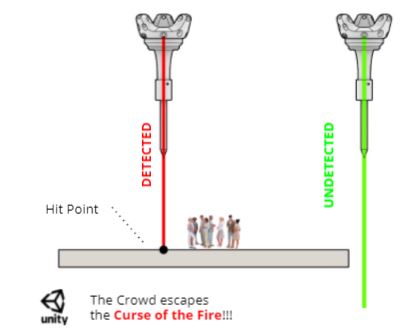

The tool is a handheld 3D-printed part with a Vive tracker mounted on top.

In the virtual environment, the tool is assigned a C# ray-cast script. It casts a ray in the forward direction, parallel to the tool tip, and detects the distance from the tool tip to the nearby surface. With this distance-detection logic, the location of the tool tip is known at all times, and the deformation of the plane is scripted using the tool tip location.
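The distance detection and plane deformation described above can be sketched as follows. This is a simplified Python illustration, not the project's C# script: it assumes a flat horizontal surface at a known height, a z-up frame, and a grid heightmap standing in for the deformable plane. All function names and parameters are hypothetical.

```python
import math


def ray_plane_distance(origin, direction, plane_height=0.0):
    """Distance along a ray to the horizontal plane z = plane_height.
    Returns None if the ray is parallel to the plane or points away from it."""
    dx, dy, dz = direction
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    if norm == 0.0:
        return None
    dz_unit = dz / norm
    if abs(dz_unit) < 1e-9:
        return None  # ray runs parallel to the surface
    t = (plane_height - origin[2]) / dz_unit
    return t if t >= 0.0 else None


def hit_point(origin, direction, distance):
    """Point reached after travelling `distance` along the normalised ray."""
    norm = math.sqrt(sum(c * c for c in direction))
    return tuple(o + d / norm * distance for o, d in zip(origin, direction))


def deform(heightmap, cell_size, hit_xy, depth, radius):
    """Return a copy of the heightmap, lowered around hit_xy.
    The dent is deepest at the hit point and fades linearly to zero at `radius`."""
    result = [row[:] for row in heightmap]
    for i, row in enumerate(result):
        for j in range(len(row)):
            d = math.hypot(j * cell_size - hit_xy[0], i * cell_size - hit_xy[1])
            if d < radius:
                row[j] -= depth * (1.0 - d / radius)
    return result


if __name__ == "__main__":
    tip = (1.0, 1.0, 0.1)      # tool tip 0.1 units above the surface
    forward = (0.0, 0.0, -1.0)  # tool pointing straight down
    dist = ray_plane_distance(tip, forward)
    contact = hit_point(tip, forward, dist)
    flat = [[0.0] * 3 for _ in range(3)]
    dented = deform(flat, 1.0, contact[:2], depth=2.0, radius=1.5)
    print(dist, contact, dented[1][1])
```

In a setup like this, the measured distance could also drive the dent depth, so that pressing the tool closer to the table carves deeper; the text does not say whether the project did this.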

Results

CLAY ENGRAVING // WORKSHOP 3.2 is a project of IAAC, Institute for Advanced Architecture of Catalonia, developed at the Master in Robotics and Advanced Construction Workshop seminar in 2020/2021 by:

Students: Shahar Abelson, Charng Shin Chen, Arpan Mathe

Faculty: Ryan Luke Johns, Jeffrey Anderson

Faculty Assistants: Daniil Koshelyuk, Soroush Garivani